MORE THAN ONE TRUTH

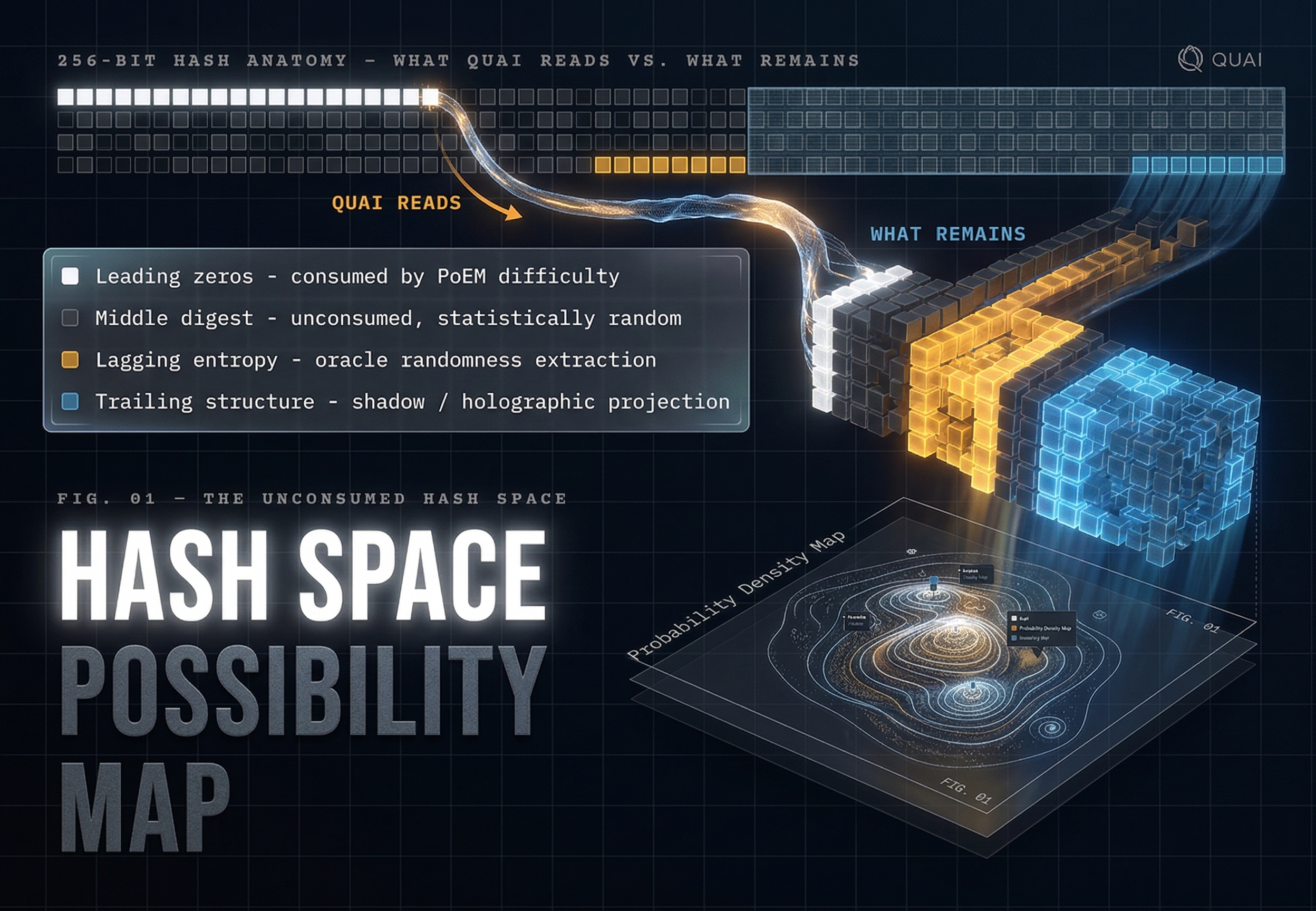

Every valid Quai hash potentially contains many truths, some symmetric and some asymmetric. PoEM (Proof-of-Entropy-Minima) consumes only the leading zeros. Everything else (the lagging digits, the trailing structure, the middle entropy) is latent possibility space. Same work already paid for. Different readings not yet taken.
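To make "more than one reading" concrete, here is a minimal Python sketch that reads a single 256-bit digest three ways. It rests on several loudly stated assumptions. SHA-256 from `hashlib` stands in for Quai's actual hash. PoEM's real entropy calculation is not reproduced; "consumes the leading zeros" is simplified to a plain count of leading zero bits. The other readings, and the names `trailing_zero_bits` and `split_readings`, are hypothetical illustrations rather than part of any Quai API.

```python
import hashlib

HASH_BITS = 256  # assumption: a 256-bit digest (SHA-256 used here as a stand-in)


def _to_int(digest: bytes) -> int:
    """Interpret the digest as a big-endian unsigned integer."""
    if len(digest) * 8 != HASH_BITS:
        raise ValueError("expected a 32-byte digest")
    return int.from_bytes(digest, "big")


def leading_zero_bits(digest: bytes) -> int:
    """Simplified stand-in for the part PoEM consumes: leading zero bits."""
    return HASH_BITS - _to_int(digest).bit_length()


def trailing_zero_bits(digest: bytes) -> int:
    """Hypothetical 'symmetric' reading: zero bits at the other end."""
    value = _to_int(digest)
    if value == 0:
        return HASH_BITS
    # value & -value isolates the lowest set bit; its position is the count.
    return (value & -value).bit_length() - 1


def split_readings(digest: bytes, tail_bits: int = 16) -> dict:
    """Hypothetical partition of one digest into several readings."""
    value = _to_int(digest)
    return {
        "leading_zeros": leading_zero_bits(digest),   # the reading PoEM takes (simplified)
        "trailing_zeros": trailing_zero_bits(digest),  # mirror-image reading
        "trailing": value & ((1 << tail_bits) - 1),   # lowest tail_bits bits
        "middle": value >> tail_bits,                 # everything above the tail
    }


if __name__ == "__main__":
    digest = hashlib.sha256(b"more than one truth").digest()
    print(digest.hex())
    print(split_readings(digest))
```

The point of the sketch is that every reading comes from the same digest, so none of them requires any additional hashing work.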